Tightly-Coupled LiDAR–Inertial Odometry with Geometric-Uncertainty Modeling

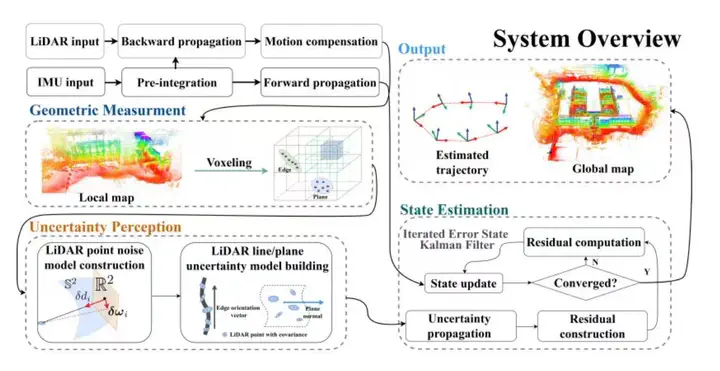

System overview of LIO-GUM. The system comprises three main modules: geometric-uncertainty model construction, geometric residual computation, and state estimation.

Abstract

Real-time, accurate state estimation and map reconstruction are crucial for unmanned systems. However, existing LiDAR-inertial-visual odometry (LIVO) methods typically rely on short-term data association, which makes it difficult to maintain stable operation in environments where LiDAR or visual measurements degenerate. In this work, we present Voxel-LIVO, a precise and robust LIVO and mapping system that leverages a unified adaptive voxel map for short-term, mid-term, and long-term data association. For odometry, we employ an iterated error-state Kalman filter (IESKF) to fuse LiDAR, inertial, and visual measurements for efficient state estimation. To improve the precision of image alignment, we propose a LiDAR-map-assisted visual patch association (LM-VPA) method, which uses LiDAR planar features to apply affine transformations to image patches. For local mapping, we propose a novel sequential LiDAR-visual local bundle adjustment (BA) approach, which establishes mid-term data association to further improve the precision of the local map and mitigate state drift. To maintain accuracy while minimizing memory overhead, we propose a hybrid map-management scheme that combines a keyframe-based sparse long-term voxel map with a densely updated sliding-window voxel map. Extensive experiments on public benchmark datasets and our own private datasets demonstrate that the proposed system significantly outperforms other state-of-the-art odometry systems in accuracy and robustness, particularly in highly degenerate environments (see attached video).
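
To make the hybrid map-management idea concrete, the following is a minimal sketch, not the system's actual implementation. It assumes a fixed voxel size, a sliding window measured in scans, and a keyframe decision supplied by the caller. The names `voxel_key`, `HybridVoxelMap`, `insert_scan`, and `query` are hypothetical and chosen only for illustration. The adaptive voxel sizing and plane features mentioned in the abstract are deliberately left out.

```python
import math
from collections import deque


def voxel_key(point, voxel_size):
    """Map a 3D point to the integer index of the voxel that contains it."""
    return tuple(math.floor(c / voxel_size) for c in point)


class HybridVoxelMap:
    """Toy hybrid map (illustrative assumption, not the paper's data structure).

    - window: dense voxel maps for the most recent scans only.
    - long_term: sparse voxel map that stores points from keyframes only.
    """

    def __init__(self, voxel_size=0.5, window_size=3):
        self.voxel_size = voxel_size
        # Each entry is one scan, stored as {voxel key: [points]}.
        # deque(maxlen=...) drops the oldest scan automatically.
        self.window = deque(maxlen=window_size)
        self.long_term = {}

    def insert_scan(self, points, is_keyframe=False):
        """Add a scan to the sliding window, and to the long-term map if it is a keyframe."""
        scan_voxels = {}
        for p in points:
            scan_voxels.setdefault(voxel_key(p, self.voxel_size), []).append(p)
        self.window.append(scan_voxels)
        if is_keyframe:
            for key, pts in scan_voxels.items():
                self.long_term.setdefault(key, []).extend(pts)

    def query(self, point):
        """Return (recent, persistent) points stored in the voxel containing `point`.

        A keyframe that is still inside the window appears in both lists.
        """
        key = voxel_key(point, self.voxel_size)
        recent = []
        for scan_voxels in self.window:
            recent.extend(scan_voxels.get(key, []))
        persistent = list(self.long_term.get(key, []))
        return recent, persistent
```

In this sketch, memory for dense data is bounded by `window_size`, while the long-term map grows only when a keyframe is inserted. That is the trade-off the abstract describes, reduced to its simplest form.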